Robots can enrich humanity - as long as we can keep them under control

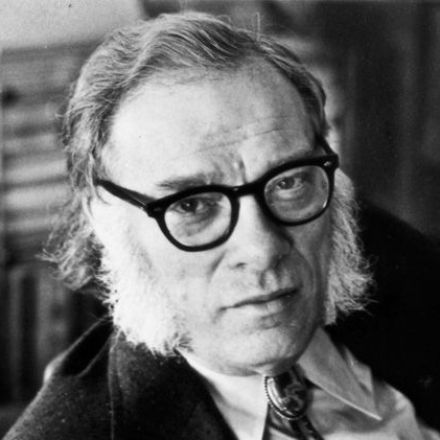

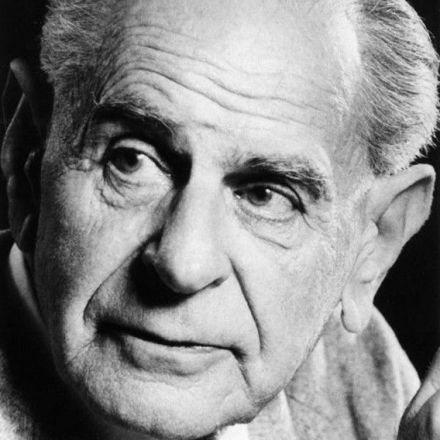

Way back in 1942, the great science fiction writer Isaac Asimov formulated three laws that robots should obey. First, a robot may not injure a human being or, through inaction, allow a human being to come to harm. Second, a robot must obey the orders given it by human beings, except where such orders would conflict with the First Law. Third, a robot must protect its own existence as long as such protection does not conflict with the First or Second Law.
