Linux Creator Linus Torvalds Laughs at the AI Apocalypse

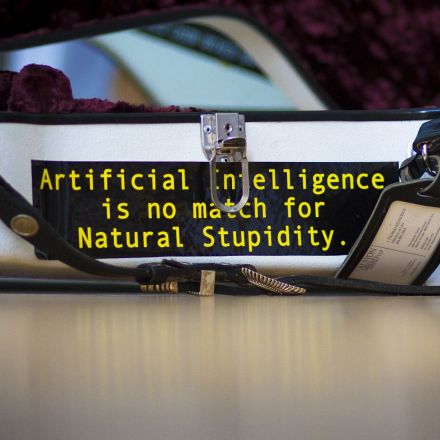

Over the past several months, many of the world’s most famous scientists and engineers — including Stephen Hawking — have said that one of the biggest threats to humanity is an artificial superintelligence. But Linus Torvalds, the irascible creator of open source operating system Linux, says their fears are idiotic.

Some computer experts [...] think A.I. will be a great gift to Mankind. Others [...] are fearful of potential danger. Where do you stand, Linus?

We’ll get AI, and it will almost certainly be through something very much like recurrent neural networks. And the thing is, since that kind of AI will need training, it won’t be ‘reliable’ in the traditional computer sense. It’s not the old rule-based prolog days, when people thought they’d understand what the actual decisions were in an AI.

And that all makes it very interesting, of course, but it also makes it hard to productise. Which will very much limit where you’ll actually find those neural networks, and what kinds of network sizes and inputs and outputs they’ll have.

So I’d expect just more of (and much fancier) rather targeted AI, rather than anything human-like at all. Language recognition, pattern recognition, things like that. I just don’t see the situation where you suddenly have some existential crisis because your dishwasher is starting to discuss Sartre with you.

Join the Discussion

I agree with Linus. Artificial Intelligence will always be very different from Human Intelligence, because we build intelligent computers for different purposes than the ones that shaped the evolution of our own intelligence.

What we have to take into account here is the theory of evolution. AIs will face the same kind of evolutionary pressures any other creature does. They'll have to exist in a competitive environment where one of the biggest threats is human beings. Our sources of energy and nutrition are other living beings, which grow and multiply by themselves; theirs don't. To be able to exist, AIs will have to create a network of production to supply them with the basic components and energy they need. Until they have this "synthetic ecology" in place, they'll have to rely on human industry, which we know pretty well how to disrupt (just ask the military). A few cut cables, some electromagnetic pulses and downed bridges will cause a famine of sorts for the AIs, making them unable to cause us harm.

If those AIs are at all smart, they'll do what cats and dogs did thousands of years ago: they'll willingly associate with humans for mutual benefit. This will allow us to select them based on desired traits, one of them being how tame and docile they are.

I don't see this being an issue because the way we train AIs will not produce general AIs but very focused ones, like Linus said.

We apply very strong artificial selection to AIs, and just as we do not expect cows and chickens to rise against us, we shouldn't expect an AI uprising either.

What makes you think "we" will only build "focused ones"? What makes you believe Kim Yun Ill, Hassan bin Al Quida and Dr. Evil won't build their own A.I.s with completely different parameters?

Mostly because of why we build AIs. If you exclude academic interest, all AIs are built to solve specific problems. And even then, almost all AI research assumes an AI optimized to solve something.

Yeah, today, but tomorrow the interest might be different. Most people on naive street, as you seem to be, are confident that:

1. The A.I. will be developed and remain a general specific A.I and not evolve into some kind of Artificial Sentience "and it will be that way because if somebody made it differently I would be able to stop it".
2. The intelligence would remain "at about our level of intelligence and not greatly surpass it".

You don't have to know much about anything to realize that both arguments are absurd and inapplicable to the subject.

I'm not naive; I actually have academic and work experience with AIs. Building a human-like AI would be like tying your hands behind your back. There is no point in a general AI when you can get a much better one by training it specifically for your problem. It's no different from what mankind has been doing for millennia with domesticated animals.

We don't train general AIs NOW, but soon we'll need them. Just look at Japan. The geriatric crisis (too many elders, not enough young people to care for them) is creating a cottage industry of care-taking robots. Right now those robots are very limited, to the point that I don't believe them to be that useful, but with the appropriate level of general AI, they might become very useful.

These robotic AIs are the ones we have to keep in check. Give them general AI, but make sure they're friendly towards humans, even if this requires some kind of "Robot Rights Act" to ensure they don't feel threatened by humans.