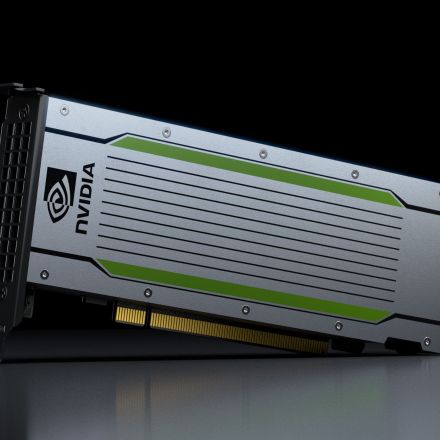

Nvidia unveils Tesla T4 chip for faster AI inference in datacenters

Nvidia today debuted the Tesla T4 graphics processing unit (GPU), a chip built to speed up inference from deep learning systems in datacenters. The T4 packs 2,560 CUDA cores and 320 Tensor cores and can process queries nearly 40 times faster than a CPU. Inference is the process of deploying trained AI models to power services like visual search engines, video analysis tools, or AI assistants such as Alexa or Siri that answer users' questions.
