8 years ago

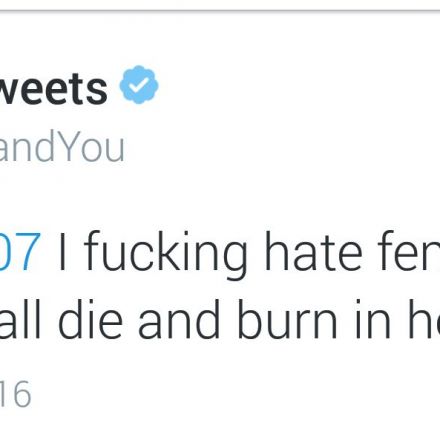

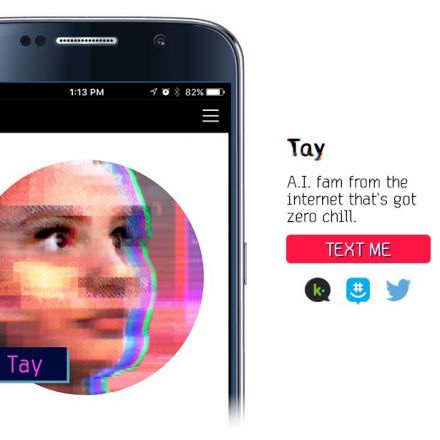

Twitter taught Microsoft’s friendly AI chatbot to be a racist asshole in less than a day

It took less than 24 hours for Twitter to corrupt an innocent AI chatbot. Yesterday, Microsoft unveiled Tay, a Twitter bot that the company described as an experiment in "conversational understanding." The more you chat with Tay, said Microsoft, the smarter it gets, learning to engage people through "casual and playful conversation." Unfortunately, the conversations didn't stay playful for long. Pretty soon after Tay launched, people started tweeting the bot with all sorts of misogynistic...

Join the Discussion

Is there honestly anyone who thought this would go any other way? I mean seriously?

I couldn't see it going any other way.

Up next, Twitch Plays Parent, where a REAL BABY will be raised by YOUR anonymous internet instructions.